Beliefs, attitudes and public misperceptions

Here's a chart based on research Ben Baumberg, Kate Bell & I carried out for the charity Turn2Us last year (http://www.turn2us.org.uk/PDF/Benefits%20Stigma%20in%20Britain.pdf). I thought it might be of interest in the context of the Royal Statistical Society's 'Perils of Perception' event today (http://www.rssenews.org.uk/2013/07/rss-commission-new-research-into-publ...). Polling for the RSS indicates that public beliefs about many important issues such as migration, teenage pregnancy and crime are wildly inaccurate. Benefit fraud, the subject of the chart, is overestimated by a factor of 34.

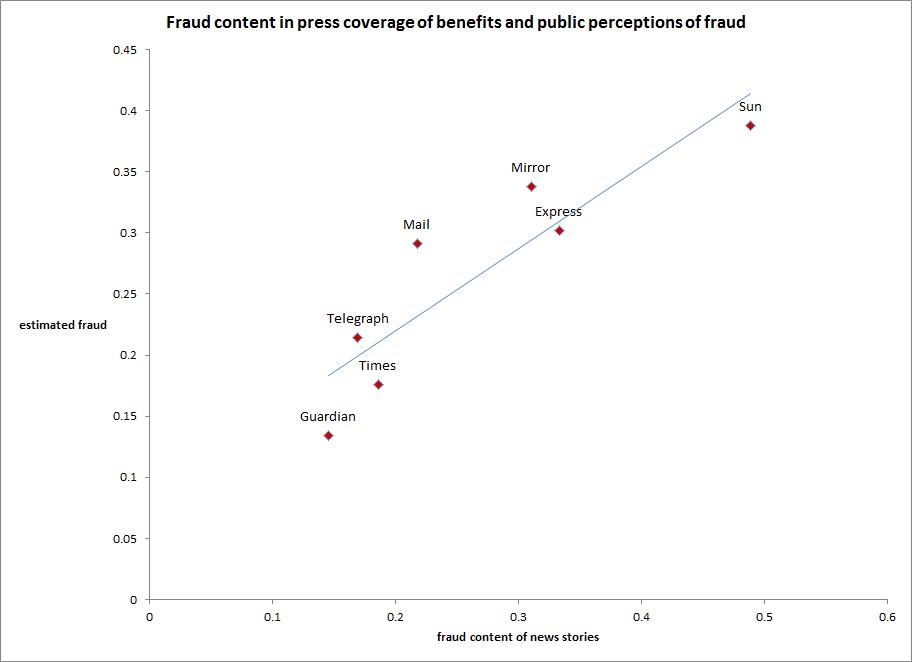

The chart shows how people's estimates of benefit fraud vary according to the level of fraud coverage in the newspaper they read. Is there a relationship between the two? Obviously there is: it's not mechanistic, but it's well approximated by the linear trend line. Reading papers which give a lot of coverage to benefit fraud is associated with believing there's a lot of benefit fraud. Note that readers of all titles are, on average, overestimating by a wide margin (the true value is 0.7%). But the steep slope of the trend line tells us that perceived fraud is much higher among readers who are exposed to more negative coverage.
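As a rough back-of-envelope check, the two figures quoted in this post (the 0.7% true value and the overestimate by a factor of 34) can be combined to see what average public estimate they imply. This is only a sketch: it assumes the RSS factor of 34 is measured against the same 0.7% baseline, which is not stated explicitly here, and the variable names are purely illustrative.

```python
# Figures quoted in the post.
TRUE_FRAUD_RATE_PCT = 0.7      # true level of benefit fraud, in percent
OVERESTIMATION_FACTOR = 34     # RSS polling: fraud overestimated by this factor

# Assumption: the factor of 34 is relative to the 0.7% baseline above.
implied_mean_estimate_pct = TRUE_FRAUD_RATE_PCT * OVERESTIMATION_FACTOR

print(f"Implied average public estimate: {implied_mean_estimate_pct:.1f}%")
```

On that assumption, the implied average estimate comes out at just under 24%.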

This is the point at which I'm supposed to say something to the effect that 'correlation is not causality'. Indeed it isn't, but then causality is often a pretty fuzzy notion in social science. Best to just take the chart as descriptive.

Still, it's difficult to be studiously neutral on what's going on here: even descriptive evidence inevitably prompts some theorising. For example, I have some reservations about this passage in Bobby Duffy and Hetan Shah's excellent New Statesman article on the RSS findings (http://www.newstatesman.com/politics/2013/07/muslims-benefits-and-teenag...): '[O]ur misperceptions also reflect our concerns – and this is why any number of "myth-busting" exercises are bound to flounder. Our exaggerated estimates are at least as much an effect as a cause of our concerns. Academics call this "emotional innumeracy": we’re making a point about what’s worrying us, whether we know it or not.'

This seems to me to leave some important questions open. Why /these/ concerns? For example, why are 'we' worrying about benefit fraud rather than low take-up of benefits or administrative error (both more important in cash terms)? If people form exaggerated beliefs about things like benefit fraud without outside prompting, then why do interest groups and politicians with anti-welfare views put so much effort into casting suspicion on claimants? For example, why does government routinely place statistics-based negative stories about claimants in the press whenever a controversial piece of welfare reform is being announced or voted on? And what did Grant Shapps think he was doing when he libelled 870,000 former claimants of Employment and Support Allowance (http://www.guardian.co.uk/commentisfree/2013/apr/15/conservative-claims-...)?

This is not, of course, to say that autonomous underlying concerns aren't important drivers of opinion. Our report on benefit stigma was far from endorsing simplistic media distortion or elite manipulation theories of erroneous public beliefs. Behind polling results lies a complex interplay between attitudes, personal experience, second-hand information and beliefs. It is difficult, if not impossible, to separate out the roles played by these respective factors in generating opinion poll responses or support for particular policies (although we did our best in the report).

But there is one thing we can be sure of: politicians, pressure groups and politically aligned journalists act as if influencing people's estimates of key variables were an important persuasive strategy. In other words, they act as if they thought, to reverse Bobby and Hetan's phrase, that exaggerated estimates were at least as much a 'cause' as an 'effect' of concerns. That in itself should count as evidence towards the proposition. Personally, I would happily spend less time on 'mythbusting', if it weren't for the insistent feeling that if it's worth someone's while to spread misinformation, it's worth others' while to try to set the record straight insofar as they can.